Part 1 of our roundup featuring the latest and greatest AI advancements and directions from 2020. Today we cover AlphaFold’s biology breakthrough, controllable tennis videos and causality touted as the next step for AI.

AlphaFold Winning the Protein-Structure Prediction Competition CASP by a Mile Is AI’s Biggest Breakthrough This Year

AlphaFold2, an AI program developed by DeepMind, blew away the field in the protein-structure prediction competition CASP, so much so that the competition’s co-founder commented that “in some sense” the 50-year-old problem is solved.

See our article on AlphaFold2 - 10 Things You Should Know About Biology’s ImageNet Moment for a quick rundown on what AlphaFold has achieved.

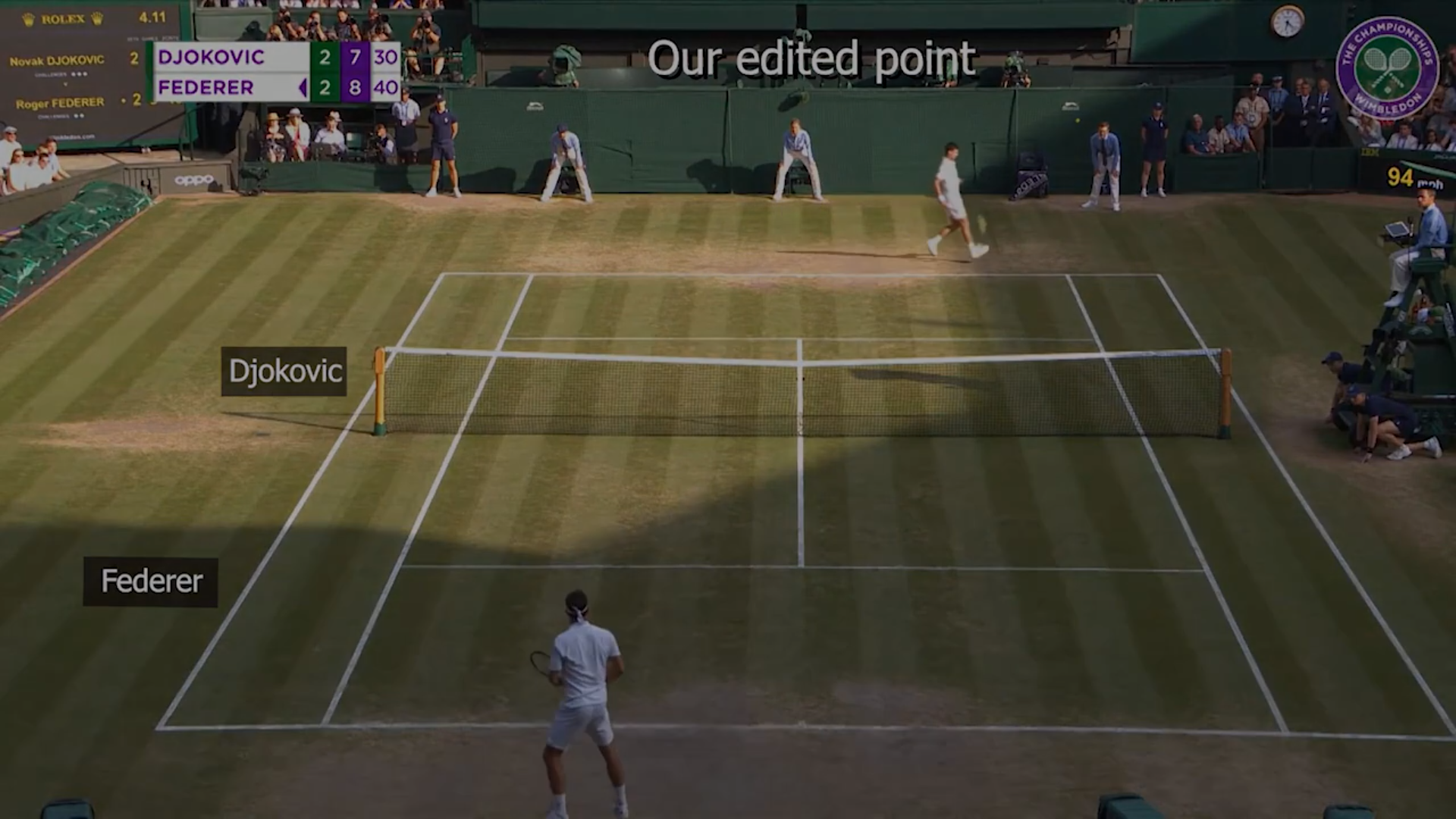

Researchers from Stanford Created A Controllable Synthetic Video Version of Wimbledon Tennis Matches

Ever wanted to climb into the television and play as Nadal or Federer in the Wimbledon final? Do you enjoy how realistic the graphics and gameplay of FIFA, Madden or NBA Live are, but wish Virtua Tennis were not so far behind?

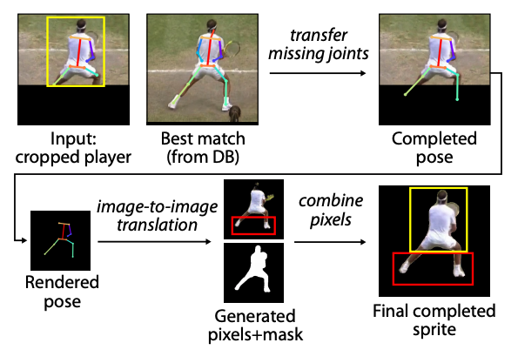

Researchers from Stanford combined a model of player and tennis ball trajectories, pose estimation, and unpaired image-to-image translation to create a realistic controllable tennis match video between any players you wish!

The next step is to see whether that fits in a game… Imagine the possibilities. A controllable or gameplay-driven Red Alert cutscene, anyone?

Is Causality the Fix for Deep Learning?

AI godfather and Turing Award recipient Yoshua Bengio has said that deep learning needs to be fixed. He believes that until AI can go beyond pattern recognition and learn more about cause and effect, it won’t deliver a true AI revolution.

His argument is that most ML applications use statistical techniques to explore correlations between variables. This only works if experimental conditions remain the same and the trained ML system is applied to the same kind of data it was trained on (it is domain specific/dependent).

Consider, for example, a doctor who diagnoses a patient and recommends a particular course of treatment. This is not something that correlation-based ML systems were designed for. Once a change in policy is made, the relationship between the input and output variables will differ from that in the training data, reducing the accuracy of the system.
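To make the policy-change problem concrete, here is a minimal toy simulation in Python. It is not taken from Bengio's work: the outcome formula, the two policies and all function names are assumptions invented for illustration. Sicker patients do worse, the treatment truly helps, and doctors initially treat only the sicker patients, so a purely correlational comparison gets the direction of the effect wrong until the treatment policy changes.

```python
import random

def simulate(n, policy, rng):
    """Draw n hypothetical patients; `policy` decides who gets treated."""
    rows = []
    for _ in range(n):
        severity = rng.random()            # 0 = mild, 1 = severe
        treated = policy(severity, rng)    # 0 or 1
        # Assumed ground truth: treatment adds +1 to the outcome,
        # severity subtracts up to 4, plus a little noise.
        outcome = 1.0 * treated - 4.0 * severity + rng.gauss(0.0, 0.1)
        rows.append((treated, outcome))
    return rows

def naive_effect(rows):
    """Correlational estimate: mean outcome of treated minus untreated."""
    treated = [y for t, y in rows if t == 1]
    untreated = [y for t, y in rows if t == 0]
    return sum(treated) / len(treated) - sum(untreated) / len(untreated)

def doctors_policy(severity, rng):
    # Old policy: only the sicker half of patients is treated.
    return 1 if severity > 0.5 else 0

def randomized_policy(severity, rng):
    # New policy: treatment is assigned by coin flip, independent of severity.
    return 1 if rng.random() < 0.5 else 0

rng = random.Random(0)
observed_effect = naive_effect(simulate(20000, doctors_policy, rng))
randomized_effect = naive_effect(simulate(20000, randomized_policy, rng))

print("naive effect under doctors' policy:", round(observed_effect, 2))
print("naive effect under randomized policy:", round(randomized_effect, 2))
```

Under the doctors' policy the treated group looks worse off, because severity drives both treatment and outcome. Once treatment no longer depends on severity, the same comparison recovers a positive effect, so a model fitted to the old data would mislead under the new policy.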

Causal inference explicitly addresses this issue, and Bengio and other pioneers in the field, such as Judea Pearl, believe it will be a powerful way to make ML systems generalize better, be more robust and produce more explainable decisions.

Bengio and his students have published some initial work in that direction.