Part 2 of our roundup of the latest and greatest AI advancements and directions from 2020. We cover the global shortage of AI talent, Graph Neural Networks as the hottest research area, and the new M1 MacBook for machine learning.

AI Talent: In Shortage, in High Demand, but Not Pandemic-Proof

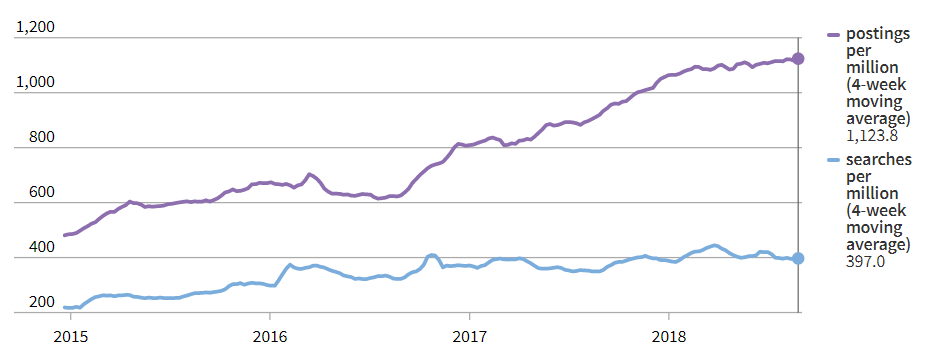

As companies around the world embraced AI between 2017 and 2019 and hired AI talent aggressively, demand outstripped the supply coming out of universities and institutions of higher learning.

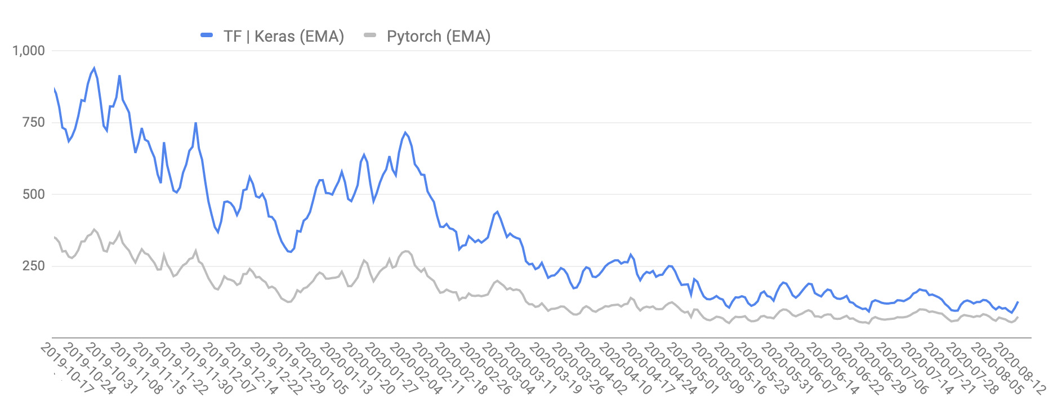

However, while the market was still hot in 2020, companies started reining in spending due to the pandemic. Public job postings on LinkedIn that mention a deep learning framework, which had been ramping up strongly in early 2020, took a hit from COVID-19 starting in February 2020.

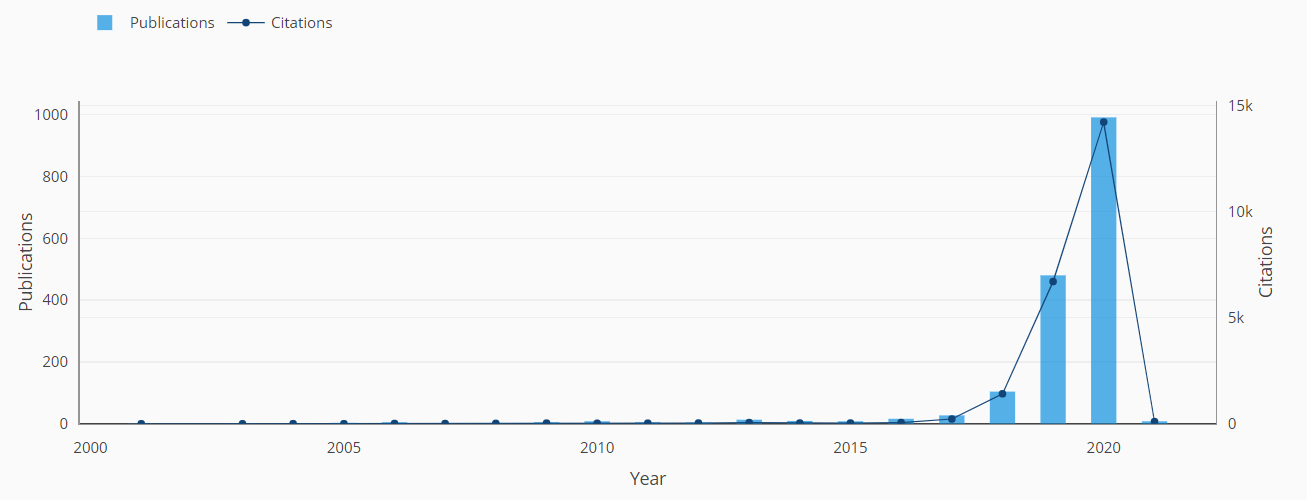

Are Graph Neural Networks the Hottest Research Area?

This year's top machine learning and AI conferences (NeurIPS, ICML and ICLR) were awash with papers on Graph Neural Networks (GNNs).

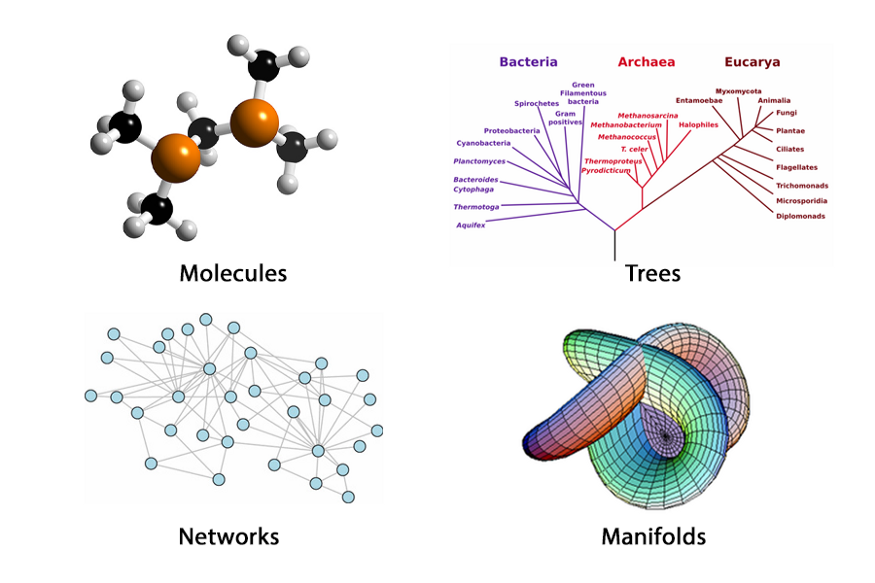

To understand this surge in popularity, it helps to view GNNs as a subset of, or a means to, Geometric Deep Learning. The vast majority of deep learning is performed on Euclidean data, that is, data laid out on regular 1D or 2D grids such as audio, text sequences and images. We, however, live in a 3D world, and there is an argument that our data should reflect that: social networks, molecules and 3D meshes are naturally graphs or manifolds rather than grids. As the community searches for new use cases, new models and new breakthroughs, non-Euclidean data and GNNs have gained popularity.
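To make the "non-Euclidean" idea a little more concrete, here is a minimal sketch of a single graph convolution layer, loosely following the well-known GCN propagation rule ReLU(D^-1/2 (A + I) D^-1/2 X W). This is just one of many GNN variants, picked by us for illustration. Instead of sliding a filter over a regular grid, each node combines its own features with those of its neighbours (normalised by node degree) and then applies a shared linear transform. The function name `gcn_layer`, the toy graph and the weights are our own hypothetical choices, and the code uses plain Python lists to stay dependency-free rather than being how any particular library implements it.

```python
import math


def gcn_layer(adj, features, weights):
    """One GCN-style step: ReLU(D^-1/2 (A + I) D^-1/2 X W).

    adj      -- n x n adjacency matrix of an undirected graph (0/1 entries)
    features -- n x f node feature matrix X
    weights  -- f x h weight matrix W, shared by every node
    """
    n = len(adj)
    # Add self-loops so each node also keeps its own features.
    a_hat = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    # Degree of each node in the self-looped graph.
    deg = [sum(row) for row in a_hat]
    # Symmetric normalisation: a_hat[i][j] / sqrt(deg[i] * deg[j]).
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)]
            for i in range(n)]
    # Aggregate neighbour features: norm @ features.
    f = len(features[0])
    agg = [[sum(norm[i][k] * features[k][c] for k in range(n)) for c in range(f)]
           for i in range(n)]
    # Shared linear transform (agg @ weights) followed by ReLU.
    h = len(weights[0])
    return [[max(0.0, sum(agg[i][c] * weights[c][o] for c in range(f)))
             for o in range(h)] for i in range(n)]


# Hypothetical toy graph: a path 0 - 1 - 2 with a signal only on node 0.
adj = [[0, 1, 0],
       [1, 0, 1],
       [0, 1, 0]]
x = [[1.0], [0.0], [0.0]]
w = [[1.0]]

one_layer = gcn_layer(adj, x, w)           # node 2 is still 0.0
two_layers = gcn_layer(adj, one_layer, w)  # the signal now reaches node 2
print(one_layer)
print(two_layers)
```

After one layer the signal from node 0 reaches its direct neighbour (node 1) but not node 2, which sits two hops away; a second layer carries it there. Stacking layers is how a GNN widens its receptive field over the graph, much as stacking convolutions widens it over an image.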

This introduction to Geometric Deep Learning by Flawnson Tong is a pretty good read.

If you prefer poring over hundreds of papers in the area (or a few survey papers), this list of resources on GNNs is as good as any.

Benchmarks of Machine Learning Workloads on the New MacBook's M1 Chip Are Looking Strong

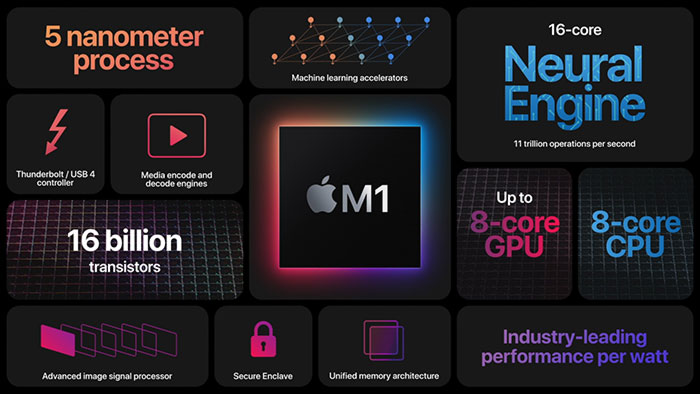

Apple’s new MacBooks, “armed” (pun intended) with the new M1 chip, have so much going for them: great performance on both native (ARM) and translated (x86, via Rosetta 2) workloads, crazy (for a laptop) battery life and fanless awesomeness (for the MacBook Air).

Early machine learning benchmarks are also looking excellent: fitting an object detection model with Apple’s Create ML was 3.6x faster on the M1 than on its Intel CPU and AMD Radeon GPU counterparts.

What we really want to (and should soon) see is how it performs against Nvidia’s amazing new Ampere GPUs.